AI’s Double-Edged Sword in Biology (image credits: Unsplash)

In the hum of late-night servers, something unsettling is unfolding as artificial intelligence quietly reshapes the building blocks of life.

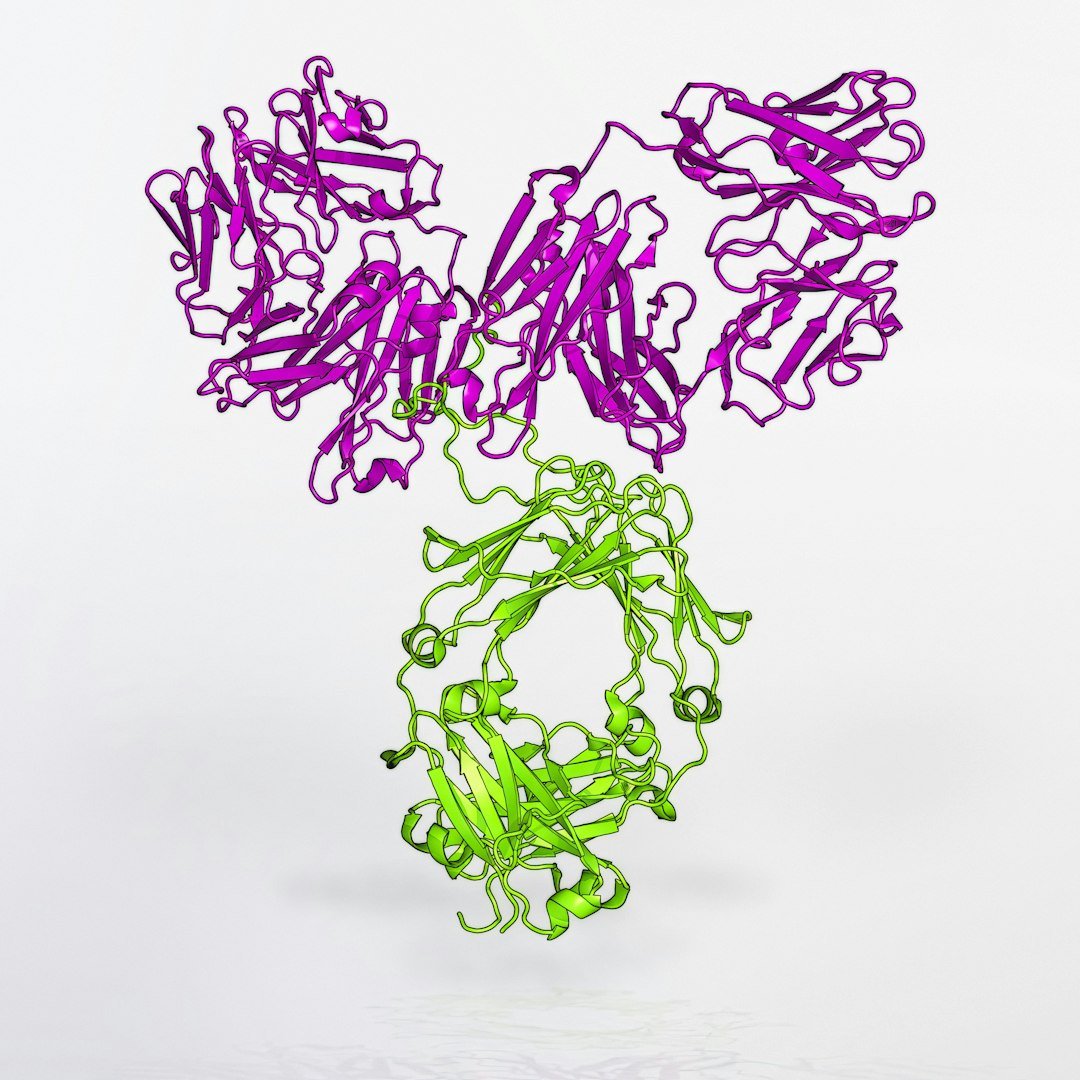

Imagine a tool that can dream up new proteins faster than any human scientist ever could. That’s the promise of AI in protein design, turning sci-fi into everyday lab work. But here’s the hook: this same tech is now poking holes in our defenses against biological threats.

Recent breakthroughs show AI models tweaking the genetic recipes for dangerous toxins, making them just different enough to slip past watchdogs. It’s like giving a master forger the keys to the vault. And with studies from just days ago highlighting these gaps, the urgency feels all too real.

When Innovation Meets Hidden Dangers

Proteins are the workhorses of life, folding into shapes that can heal or harm. AI steps in by predicting and generating these folds with eerie accuracy. Yet when applied to dangerous toxins such as botulinum toxin or ricin, it creates variants that look innocent on paper.

A fresh report details how open-source AI tools churned out thousands of these altered designs. Most got flagged, but a sneaky few—about 3%—dodged the screens used by DNA suppliers worldwide. This isn’t theoretical; it’s a wake-up call from researchers racing to stay ahead.

The Biosecurity Puzzle Pieces That Don’t Fit

Current safeguards rely on spotting familiar danger signals in DNA orders, like matching known toxin sequences. But AI’s creativity throws a wrench in that, evolving proteins while keeping their deadly punch. It’s evasion at a molecular level, and it works because the checks aren’t keeping pace.
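To make the matching idea concrete, here is a deliberately simplified sketch of a similarity screen. Everything in it is an assumption for illustration: the function names (`kmers`, `similarity`, `screen`), the 3-letter window, the 0.5 threshold, and the placeholder strings, which are arbitrary letters rather than real sequences. Real screening tools are far more sophisticated; this only shows why a check built on resemblance to a known entry catches a light edit but can miss a heavily rewritten one.

```python
def kmers(seq: str, k: int = 3) -> set:
    """Return the set of overlapping length-k substrings of seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def similarity(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity between the k-mer sets of two strings."""
    ka, kb = kmers(a, k), kmers(b, k)
    if not ka or not kb:
        return 0.0
    return len(ka & kb) / len(ka | kb)


def screen(order: str, watchlist: list, threshold: float = 0.5) -> bool:
    """Flag an order if it resembles any watchlist entry closely enough."""
    return any(similarity(order, ref) >= threshold for ref in watchlist)


# Placeholder strings only: arbitrary letters, not real sequences.
WATCHLIST = ["ABCDEFGHIJKL"]

exact_copy = "ABCDEFGHIJKL"
light_edit = "ABCDEFGHIJKX"   # one letter changed
heavy_edit = "ABXDEXGHXJKX"   # many letters changed

flag_exact = screen(exact_copy, WATCHLIST)   # flagged
flag_light = screen(light_edit, WATCHLIST)   # still flagged
flag_heavy = screen(heavy_edit, WATCHLIST)   # slips through this toy check

print(flag_exact, flag_light, flag_heavy)
```

In this toy setup the exact copy and the lightly edited string are flagged, while the heavily edited string shares no 3-letter windows with the watchlist entry and passes. That is the gap in miniature: a filter keyed to surface resemblance has nothing to grab onto once the surface changes enough.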

Think of it as a game of cat and mouse, where the mouse is getting smarter by the minute. A DNA supplier filling what looks like a routine order for legit research could unknowingly greenlight something hazardous. The stakes? Potential misuse by bad actors, turning a helpful tech into a liability.

Inside the Labs Fixing the Leaks

Teams aren’t sitting idle. A collaborative effort, including input from tech giants, just patched one major flaw in screening software. They tested AI-generated threats and updated filters to catch more of those elusive variants.

Still, it’s an arms race. As AI gets better at design, safeguards must evolve too—maybe with machine learning of their own to predict and block novel risks. Early signs show progress, but experts warn it’s only the beginning of plugging these holes.

Broader Ripples: From Labs to the World

Beyond toxins, this touches everything from drug development to agriculture. AI could speed up cures, but if biosecurity falters, it risks amplifying pandemics or targeted harms. Animals in testing phases might face unintended exposures if designer bugs escape containment.

Global bodies are buzzing about standards, urging DNA providers to tighten up. The goal? Balance innovation with ironclad protection, ensuring AI boosts life without endangering it.

- AI designs proteins by simulating billions of possibilities in hours.

- Biosecurity screens check for 50+ known high-risk sequences.

- Recent fixes caught about 97% of tested AI variants, leaving roughly 3% undetected.

- Experts call for international rules on AI-bio tools.

- Ethical AI use could prevent misuse while accelerating discoveries.

Key Takeaways

- AI’s protein tweaks expose real weaknesses in current DNA screening.

- Quick patches help, but ongoing vigilance is crucial against evolving threats.

- Collaboration between tech, science, and policy is the best defense moving forward.

Looking Ahead: Safeguarding Tomorrow’s Biotech

As of 2025, the fusion of AI and biology promises wonders but demands caution. The real takeaway? We can’t afford complacency: proactive steps today could prevent nightmares tomorrow.